Think back to Question #13, where we explored chromaticities. You may have wondered where that triangle went, and why I removed it from the subsequent diagrams in Question #15.

Why did we have to learn about chromaticities instead of RGB values? Because, as you have hopefully learned from the preceding batch of questions, RGB is a relative encoding model, which makes comparisons extremely confusing! How can we compare the Display P3 “green” on your iPad Pro at 100% emission strength with the “green” on one of your sRGB devices, when the two values expressed in RGB are identical? We can’t!

Guess what? That leads us directly to the subject of our current question…

Question #16: What is the triangle that appears commonly on a chromaticity diagram?

The goal here, in this back alley of the interwebs of colour debauchery, is for you, the pixel f*cker¹, to completely and utterly comprehend what is presented to you. So, let’s quickly recap a few important details about the CIE 1931 xy chromaticity diagram:

- The diagram is an invented model that lets us connect visible spectral light energy and mixtures to numbers.

- The xy chromaticity diagram uses a warped scale that is disconnected from how we perceive differences in the visible wavelengths of light.

- The peculiar curved boundary of the shape, the spectral locus, represents the visible spectral wavelengths of light, labelled in nanometers.

- The region outside the spectral locus does not exist in any physically meaningful way. It is only given a shape at all because of the artificial model and the representations you have seen.
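To make “connect light to numbers” a little more concrete, here is a minimal sketch of the projection that produces xy coordinates. It assumes the light has already been described as CIE XYZ tristimulus values (XYZ is not introduced in this post, and the D65 figures below are the commonly quoted normalised values, not something measured here). Note how scaling the intensity leaves the chromaticity untouched, which is exactly why a chromaticity diagram carries no “brightness”.

```python
def xyz_to_xy(X, Y, Z):
    """Project an XYZ tristimulus triplet onto CIE 1931 xy chromaticity."""
    total = X + Y + Z
    if total == 0:
        raise ValueError("no light: chromaticity is undefined")
    return X / total, Y / total


# Commonly quoted XYZ of the D65 white, normalised so that Y = 1.0 (assumed values)
d65 = (0.95047, 1.0, 1.08883)
x, y = xyz_to_xy(*d65)
print(f"x = {x:.4f}, y = {y:.4f}")  # x = 0.3127, y = 0.3290

# Doubling the emission strength does not move the chromaticity at all.
brighter = tuple(2 * c for c in d65)
print(xyz_to_xy(*brighter) == (x, y))  # True
```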

Let’s have a peek at the above summary on the diagram to reinforce understanding…

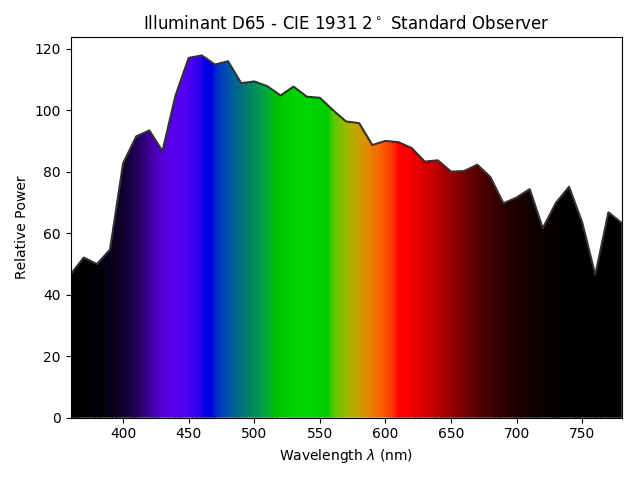

As you can see in FIGURE OMFG NON-PERCEPTUAL SCALE, the visible spectrum wavelengths are not distributed evenly around the perimeter of the spectral locus; a standard observer does not sense all wavelengths in equal measure, and a complex relationship instead exists between the electromagnetic radiation and the sensation in “standard” perceptual systems. And again, it needs to be stressed that distances measured along x and y are also not uniform with respect to how we perceive differences in sensation. Everything is warped!

Recall that in our last question, Question #15, we presented a more useful variation of the original CIE 1931 chromaticity diagram that warps and scales the x and y axes. Let’s snap our fingers and see the above image, with the exact same arrows, warped, skewed, and bent into the roughly perceptually uniform 1976 variant of the diagram.

As we can see in FIGURE WARPED PERCEPTUAL SCALE, the visible light spectra are still not uniformly distributed around the perimeter of the spectral locus, but the chromaticity coordinates on the inside are distributed in a roughly perceptually uniform manner. Because this is a modified variation of the xy diagram, the axes are relabelled u’ and v’ to signal that they form a different coordinate model.
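The warp from xy to u’v’ is a fixed formula from the CIE 1976 UCS definition. The sketch below is a minimal illustration of it, applied to the sRGB primaries and D65 white (standard published values, used here as sample inputs); the function name is just an illustrative choice.

```python
def xy_to_uv_prime(x, y):
    """Convert CIE 1931 xy chromaticity to CIE 1976 u'v' chromaticity."""
    denom = -2.0 * x + 12.0 * y + 3.0
    return 4.0 * x / denom, 9.0 * y / denom


# sRGB primaries and D65 white, as xy chromaticities
points = {
    "red": (0.64, 0.33),
    "green": (0.30, 0.60),
    "blue": (0.15, 0.06),
    "white (D65)": (0.3127, 0.3290),
}
for name, (x, y) in points.items():
    u, v = xy_to_uv_prime(x, y)
    print(f"{name:12s} xy = ({x:.4f}, {y:.4f})  ->  u'v' = ({u:.4f}, {v:.4f})")
```

The same point simply lands at different coordinates; nothing about the light has changed, only the ruler we measure it with.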

So now, what about that damn triangle? Something like this one…

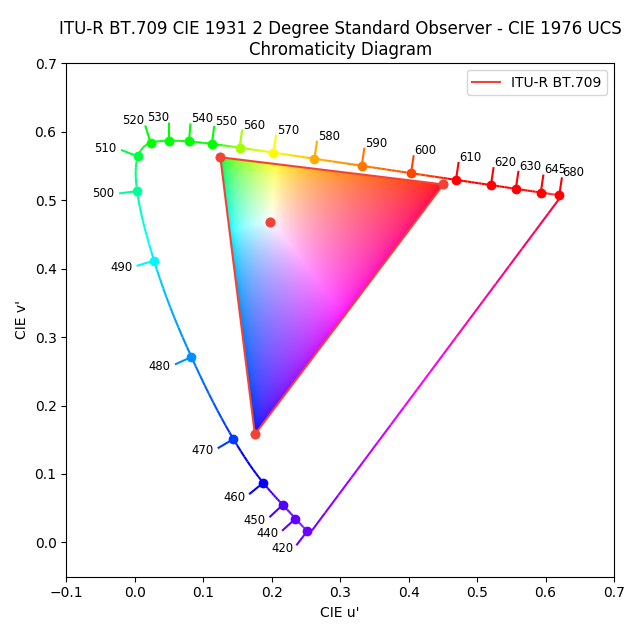

In FIGURE WTF IS THE TRIANGLE, we can see that the red dots at the tips of the triangle, and the off-centre dot inside it, plot four chromaticities. But what exactly are they? As you may have inferred, they are the chromaticities of the three primaries and the white outlined in the sRGB specification!

That is, if you are staring at a reasonably decent sRGB display, the tips of the triangle represent the colours of the three teeny little lights in each of its pixels! The off-centre dot is the chromaticity that an ideal sRGB display outputs when you set the sliders to equal R=G=B values. This chromaticity is sometimes called the “white point”. As we discovered in Question #14, “white” is just another chromaticity.
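Why do equal slider values land on the white point? One common way to see it is to build the linear RGB to XYZ matrix directly from the four chromaticities: the emission strength of each light is chosen so that R=G=B=1 sums exactly to the white. The sketch below does this in plain Python for the sRGB primaries and D65. It works on linear-light values only (the sRGB transfer function is ignored), and the helper names are hypothetical.

```python
def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(m, b):
    """Solve m @ v = b for v using Cramer's rule."""
    d = det3(m)
    return [det3([[b[r] if c == col else m[r][c] for c in range(3)]
                  for r in range(3)]) / d
            for col in range(3)]


def xy_to_xyz(x, y):
    """XYZ of a light with chromaticity (x, y), scaled so that Y = 1."""
    return (x / y, 1.0, (1.0 - x - y) / y)


def rgb_to_xyz_matrix(primaries, white):
    """Linear RGB -> XYZ matrix whose R = G = B = 1 lands exactly on `white`."""
    cols = [xy_to_xyz(*p) for p in primaries]               # one XYZ column per light
    p = [[cols[c][r] for c in range(3)] for r in range(3)]  # rearranged as rows
    scale = solve3(p, xy_to_xyz(*white))                    # strength of each light
    return [[p[r][c] * scale[c] for c in range(3)] for r in range(3)]


def apply(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


SRGB_PRIMARIES = [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)]  # red, green, blue
D65 = (0.3127, 0.3290)

M = rgb_to_xyz_matrix(SRGB_PRIMARIES, D65)
for row in M:
    print(["%.4f" % value for value in row])

# Equal slider values, at any strength, give the white chromaticity.
X, Y, Z = apply(M, [0.5, 0.5, 0.5])
print("R=G=B=0.5 ->", round(X / (X + Y + Z), 4), round(Y / (X + Y + Z), 4))
```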

Each straight edge of the triangle depicts the line of chromaticities that can be mixed from the two primary lights at its ends², while everything inside the triangle is the footprint of every chromaticity that can be mixed from the specific colours of the three RGB lights. sRGB displays, like all other RGB displays, are limited by the chromaticities of their three lights: no chromaticity beyond the triangle they form on the chromaticity diagram can be mixed!
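Both claims can be checked numerically. In the sketch below, additive mixing is modelled by summing the XYZ values of two lights (the standard behaviour of additive light), and the resulting chromaticity always lands on the straight edge between them, pulled toward whichever light is stronger, in the spirit of the gravity analogy in footnote 2. A simple point-in-triangle test then asks whether a chromaticity can be mixed at all. The helper names are hypothetical, and the Display P3 green coordinate is used only as a handy point outside sRGB.

```python
def xy_to_xyz(x, y, Y=1.0):
    """XYZ of a light with chromaticity (x, y) emitting at luminance Y."""
    return (x * Y / y, Y, (1.0 - x - y) * Y / y)


def xyz_to_xy(X, Y, Z):
    total = X + Y + Z
    return (X / total, Y / total)


def mix(*lights):
    """Additive light mixing: the XYZ values of the lights simply add up."""
    return tuple(sum(channel) for channel in zip(*lights))


def cross(o, a, b):
    """2D cross product of (a - o) and (b - o); zero when o, a and b are collinear."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def inside_triangle(p, a, b, c):
    """True if chromaticity p lies inside, or on an edge of, triangle abc."""
    d1, d2, d3 = cross(a, b, p), cross(b, c, p), cross(c, a, p)
    has_negative = d1 < 0 or d2 < 0 or d3 < 0
    has_positive = d1 > 0 or d2 > 0 or d3 > 0
    return not (has_negative and has_positive)


RED, GREEN, BLUE = (0.64, 0.33), (0.30, 0.60), (0.15, 0.06)  # sRGB primaries
WHITE = (0.3127, 0.3290)                                      # D65

# Mix the red and green lights at a few different emission strengths.
for red_Y, green_Y in [(0.2, 0.8), (0.5, 0.5), (0.8, 0.2)]:
    mixed = xyz_to_xy(*mix(xy_to_xyz(*RED, red_Y), xy_to_xyz(*GREEN, green_Y)))
    on_edge = abs(cross(RED, GREEN, mixed)) < 1e-9
    print(f"red Y={red_Y}, green Y={green_Y} -> xy ({mixed[0]:.4f}, {mixed[1]:.4f}), "
          f"on red-green edge: {on_edge}")

print("D65 white inside sRGB:", inside_triangle(WHITE, RED, GREEN, BLUE))
print("Display P3 green inside sRGB:", inside_triangle((0.265, 0.690), RED, GREEN, BLUE))
```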

So let’s move onto that iPad Pro…

Remember back in Question #4 where I told you that the “colours” of the three lights in your display are sRGB? Well, I hope our relationship is strong enough now that I can tell you that I lied. At least a little bit.

For the sake of clarity and simplicity, we introduced the complex subject using the generic sRGB standard, as that type of display is extremely common. If you happen to own an iPad Pro or MacBook Pro from 2015 onwards, the colours of the three RGB pixel lights in those particular pieces of hardware are not the same as sRGB!

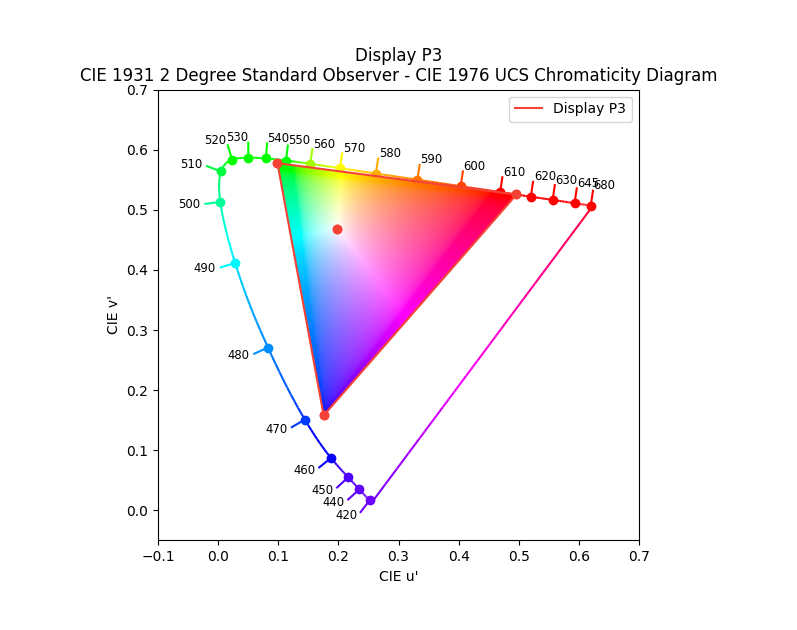

The image FIGURE DISPLAY-WHAT shows a triangle similar to the one in FIGURE WTF IS THE TRIANGLE, but with different coordinates. The chromaticity coordinates in FIGURE DISPLAY-WHAT represent the Display P3 primaries found in most of Apple’s products from 2015 onward, and in more and more displays from other vendors. While Display P3 uses the same white point as sRGB, its red and green primaries are more saturated and of different “hue angles”. That is, those two primary lights sit further out toward the purest spectral locus region, while the blue light is specified at the same chromaticity as sRGB’s blue. The red and green primaries also plot at slightly different angles from the white point compared to their sRGB counterparts. Draw a straight line from the white point to the tip of each primary, and you can see how the angle differs slightly.
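The “draw a line from the white point” exercise can be done with a few lines of arithmetic. This sketch uses the published sRGB and Display P3 primary chromaticities with the shared D65 white, and measures the angle and length of each line in plain xy. Keep in mind that, as discussed above, xy distances are not perceptually uniform, so treat the numbers as geometry on this particular diagram rather than as perceived differences.

```python
import math

D65 = (0.3127, 0.3290)
SRGB = {"red": (0.64, 0.33), "green": (0.30, 0.60), "blue": (0.15, 0.06)}
DISPLAY_P3 = {"red": (0.680, 0.320), "green": (0.265, 0.690), "blue": (0.150, 0.060)}


def angle_from_white(p, white=D65):
    """Angle, in degrees, of the line from the white point to chromaticity p."""
    return math.degrees(math.atan2(p[1] - white[1], p[0] - white[0]))


def distance_from_white(p, white=D65):
    """Length of the line from the white point to chromaticity p, in xy units."""
    return math.hypot(p[0] - white[0], p[1] - white[1])


for name in ("red", "green", "blue"):
    s, p = SRGB[name], DISPLAY_P3[name]
    print(f"{name:5s} angle: sRGB {angle_from_white(s):7.2f} deg, P3 {angle_from_white(p):7.2f} deg"
          f" | distance: sRGB {distance_from_white(s):.3f}, P3 {distance_from_white(p):.3f}")
```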

To get a better sense of these differences in colorimetry, let’s compare them together in one image using the useful perceptually uniform scale.
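One rough way to put a number on the comparison is to measure each triangle’s footprint on the u’v’ diagram with the shoelace formula. This is only a sketch: triangle area on a chromaticity diagram ignores intensity entirely, so it says nothing about gamut volume, and u’v’ is only roughly uniform. The primaries are the published sRGB and Display P3 values.

```python
def xy_to_uv_prime(x, y):
    """Convert CIE 1931 xy chromaticity to CIE 1976 u'v' chromaticity."""
    denom = -2.0 * x + 12.0 * y + 3.0
    return 4.0 * x / denom, 9.0 * y / denom


def triangle_area(points):
    """Area of a triangle from its three corner coordinates (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


SRGB = [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)]
DISPLAY_P3 = [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)]

area = {}
for name, primaries in (("sRGB", SRGB), ("Display P3", DISPLAY_P3)):
    xy_area = triangle_area(primaries)
    uv_area = triangle_area([xy_to_uv_prime(*p) for p in primaries])
    area[name] = uv_area
    print(f"{name:10s} xy area {xy_area:.4f}   u'v' area {uv_area:.4f}")

print("Display P3 / sRGB footprint in u'v':", round(area["Display P3"] / area["sRGB"], 3))
```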

Recall Question #3, where we learned that the chromaticity of each of the three lights in our displays never changes, only the intensity of its emission? The reason the hardware in a Display P3 device can display a wider range of more saturated colour mixtures is that the chromaticities of the lights in each of its RGB pixels differ from sRGB’s! To really hammer this home, I’d strongly encourage you to go back and review Question #3 and Question #4, as the answers provided will be more meaningful now with what we have put into place.
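This also settles the puzzle from the start of the post, where the two “greens” had identical RGB values. Building an RGB to XYZ matrix for each set of lights and converting between them shows what a Display P3 green at full emission would require of an sRGB display: values below zero, which no real light can emit, meaning the chromaticity sits outside the sRGB triangle. A minimal sketch on linear-light values (transfer functions ignored), with hypothetical helper names:

```python
def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(m, b):
    """Solve m @ v = b for v using Cramer's rule."""
    d = det3(m)
    return [det3([[b[r] if c == col else m[r][c] for c in range(3)]
                  for r in range(3)]) / d
            for col in range(3)]


def xy_to_xyz(x, y):
    """XYZ of a light with chromaticity (x, y), scaled so that Y = 1."""
    return (x / y, 1.0, (1.0 - x - y) / y)


def rgb_to_xyz_matrix(primaries, white):
    """Linear RGB -> XYZ matrix whose R = G = B = 1 lands exactly on `white`."""
    cols = [xy_to_xyz(*p) for p in primaries]
    p = [[cols[c][r] for c in range(3)] for r in range(3)]
    scale = solve3(p, xy_to_xyz(*white))
    return [[p[r][c] * scale[c] for c in range(3)] for r in range(3)]


def apply(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


SRGB = [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)]
DISPLAY_P3 = [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)]
D65 = (0.3127, 0.3290)

srgb_to_xyz = rgb_to_xyz_matrix(SRGB, D65)
p3_to_xyz = rgb_to_xyz_matrix(DISPLAY_P3, D65)


def p3_to_srgb(rgb):
    """Linear sRGB values that would produce the same XYZ as the given linear P3 values."""
    return solve3(srgb_to_xyz, apply(p3_to_xyz, rgb))


green = p3_to_srgb([0.0, 1.0, 0.0])
white = p3_to_srgb([1.0, 1.0, 1.0])
print("P3 full green as sRGB:", [round(c, 4) for c in green])
print("P3 white as sRGB:     ", [round(c, 4) for c in white])
```

The shared white point comes through as equal values in both systems, while the P3 green needs negative red and blue emission in sRGB terms.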

And with that, we can answer our question…

Answer #16: The triangles commonly found on chromaticity diagrams plot the chromaticities of the three basis primary lights in a display, or in some other three-light RGB standard.

“But wait a minute… what the hell is a gamut?” “Can we deduce the spectral compositions from a single chromaticity?” “How do the sliders behave with different pieces of display hardware?” “I’m a photographer, I shoot real scenes not displays, how does this all relate?” “How does offset and spot printing relate to all of this?”

All terrific questions that will just have to wait a little bit more…

¹ I’d like to give full credit to a very large cranium visual effects supervisor slash talented image maker, Erik Nordby, for coining that turn of phrase. While he has used it as a derogatory term, I feel it adequately describes what many folks who deal with digital content creation do, and I have actively repurposed it here as a badge of honour.

² It might be helpful to think of lights as points on the chromaticity diagram that “pull” towards themselves, like gravity, as their emissions grow. At equal energy between two lights on the chromaticity diagram, the resulting mixture lands somewhere between the two. As one light’s emission grows, it “pulls” the chromaticity away from the other light and towards itself. If no other light is “pulling” against it, the light is the purest and most heavily saturated it can get. This logic carries on even to the spectral, laser-like wavelengths represented around the perimeter of the spectral locus! The wavelength composition of D65 daylight, for example, could be thought of as a bunch of visible spectra under tension with each other, with their relative powers “pulling” toward the spectral locus curve to greater or lesser degrees.

2 replies on “Question #16: What the F*ck is the Triangle Thingy in the Chromaticity Diagram?”

I think I am missing something about the differences between displays. If the only physical lights are the ones described by the different wavelengths of the spectral locus, a single LED can only produce light of a color at those wavelengths, and never in the inside region, as that is just the result of mixing 3 LEDs of different wavelengths. In the sRGB and DCI-P3 triangles (as in many others), the 3 primaries always sit inside the spectral locus, which means (unless I am missing something, which is very possible) that in those triangles the purest primaries never completely turn off the LEDs of the other two primaries; the purest green never has the red and blue LEDs turned off, as its value is not on the spectral locus but inside it, meaning it is still to some extent a mix of the 3 LEDs. Why is that? If you can buy a 560nm LED on Amazon, shouldn’t displays set their primaries right on the spectral locus, at the highest purity? The answer is clearly no, as most don’t have pure primaries, but I can’t figure out the reason. Does it depend on the maximum nits of the display? Does the LED type change from one display to another? Or where did I get lost?

I have read the next two chapters and gone back to the previous ones, but I haven’t been able to answer this question, so I am asking it here. Feel free to redirect me to another of the questions if I have missed something or have been too impatient.

Thank you very much for all the work in these posts, they are pure gold.


You are quite right in your general inference, with one slight modification.

The primaries are spectral *combinations* themselves. They are *not* pure, sharp 1nm spectra for example, but rather a blended mixture that is often undisclosed.

So while you are quite correct that for every value within the footprint the opponent emissions would remain on, the “purest” form always has a channel totally off. This is because, again, the “purity” of each channel in isolation is not a spectrally sharp emission from some thin range.

As for your question as to “Why”, the reason is that manufacturing emitters with sharp spectra at that size is extremely expensive, and there is a very real thermal issue.

Consider the flicker-photometry-based luminance, or **loosely** an approximation of “brightness”, of a deep short-wavelength blue. As we move *outward* toward the locus, the amount of “brightness” we detect grows lower. Therefore, to make a display of any use, we would need to pump a *tremendous* quantity of energy through those diodes.

We aren’t quite there yet, and frankly, our entire image formation chain is likely so wonky as to make it less than useful anyway.

Hope this helps.
